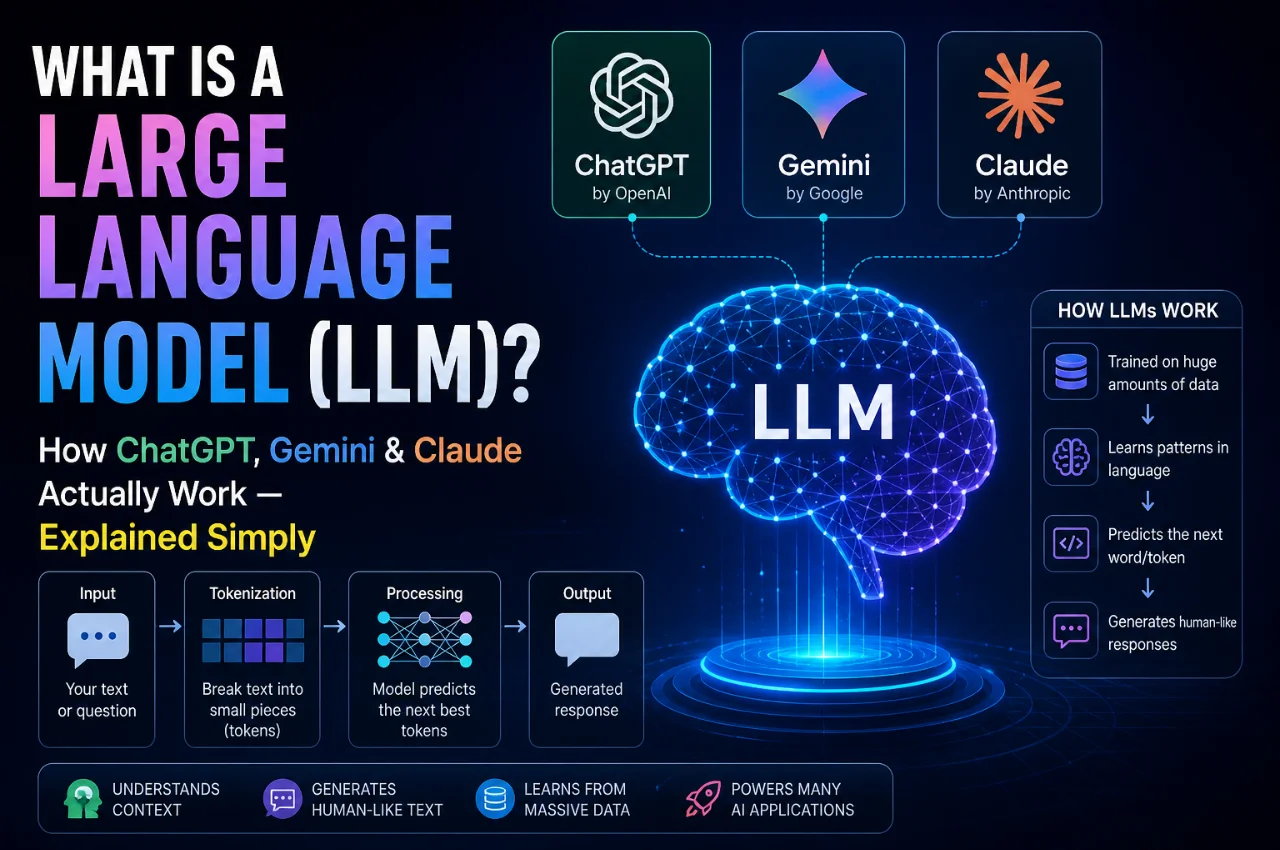

You’ve used ChatGPT to write an email. You’ve asked Gemini a question on your phone. You’ve heard about Claude helping people summarize long documents. But have you ever stopped and wondered — what is actually happening inside these AI tools? What are they really doing when they give you an answer?

The technology behind all of them has a name: Large Language Model, or LLM. And understanding what an LLM is — even at a basic level — will completely change how you think about AI, how you use these tools, and why they sometimes get things wrong.

This guide explains everything from scratch, in plain simple language. No engineering degree required.

What is a Large Language Model (LLM)?

A Large Language Model is an artificial intelligence system that has been trained on enormous amounts of text — billions of words from books, websites, articles, research papers, code, conversations, and more — to understand and generate human language.

The word “large” is doing a lot of work in that name. These models are not large in the way a big file is large. They are large in terms of their internal complexity — the number of parameters, or adjustable settings, that the model tunes during training. Modern LLMs like ChatGPT, Gemini, and Claude have hundreds of billions of these parameters. This scale is what gives them their remarkable ability to write, reason, explain, translate, and create.

At their core, LLMs work as giant statistical prediction machines. Every time you ask ChatGPT a question, the model is doing one thing repeatedly — predicting the most statistically likely next word based on everything it was trained on. That’s the fundamental trick. And yet, when scaled up to hundreds of billions of parameters and trained on the combined text of the internet, this simple prediction task produces something that can feel remarkably intelligent.

Think of it this way. If you have read millions of books, articles, and conversations, you develop a deep intuition for how language works — what words follow what other words, how ideas connect, how problems are solved, how questions are answered. An LLM learns the same intuition, but from far more text than any human could read in a thousand lifetimes.

The History — How Did We Get Here?

LLMs didn’t appear overnight. Understanding their history helps you appreciate why they’re such a big deal.

For most of computer history, AI systems were built around explicit rules. A chatbot would look for keywords in your message and return a pre-written response. Type “refund” and it would take you to a returns page. Type anything unusual and it would be completely lost. These systems weren’t intelligent — they were sophisticated if-then-else programs.

The real breakthrough came in 2017 when researchers at Google published a paper titled “Attention is All You Need.” This paper introduced a new neural network architecture called the Transformer, and it changed everything. The Transformer gave AI models the ability to understand context — to grasp not just individual words, but the relationships between words across long passages of text. This was the architectural foundation that made modern LLMs possible.

Building on this, OpenAI released GPT-3 in 2020 — a model so capable that it shocked the AI research community. But it was still mostly a research curiosity. The real turning point came in late 2022 when OpenAI launched ChatGPT — a version of their model fine-tuned to hold natural conversations with ordinary people. Within two months, it reached 100 million users, making it the fastest-growing consumer application in history. The AI era had truly begun.

Since then, progress has been breathtaking. Google launched Gemini. Anthropic launched Claude. Meta released Llama as an open-source model. And the capabilities of these systems have grown dramatically with each new generation.

How Does an LLM Actually Work? Step by Step

Let’s go behind the scenes and understand the process — from raw data to the AI assistant you talk to today.

Step 1 — Data Collection

Everything starts with data. An LLM is trained on an enormous dataset of text from across the internet and beyond — websites, books, scientific papers, Wikipedia, programming code, social media discussions, news articles, and much more. We’re talking about trillions of words. This dataset becomes the model’s entire knowledge of the world and of language.

Step 2 — Tokenization

Before the model can learn from text, the text needs to be broken down into smaller units called tokens. Tokens are not always full words — they can be parts of words, punctuation marks, spaces, or single characters. For example, the word “unhappiness” might be broken into tokens like “un,” “happi,” and “ness.” The entire conversation you have with an AI is processed as a sequence of these tokens. Modern LLMs can handle context windows of hundreds of thousands of tokens in a single session — meaning they can “remember” and process very long documents or conversations at once.

Step 3 — Pre-Training

During pre-training, the model processes the entire training dataset and learns one fundamental task — predicting the next token in a sequence. It sees a piece of text with the last word hidden and tries to predict what that word should be. It gets it wrong, the error is calculated, and the model adjusts its billions of parameters slightly to do better next time. This process is repeated trillions of times across the entire dataset.

The architecture that makes this work is called the Transformer, specifically a mechanism within it called attention. Attention allows the model to look at every other token in the input simultaneously and decide which ones are most relevant for predicting the next word. This is why LLMs can understand complex, nuanced sentences — they’re not reading left to right one word at a time. They’re considering the entire context at once.

By the end of pre-training, the model has internalized an extraordinary amount of knowledge about language, facts, logic, writing styles, and reasoning patterns — all purely from predicting text.

Step 4 — Fine-Tuning and RLHF

A pre-trained model is powerful but raw. It generates text that continues whatever pattern it sees, which isn’t always useful as a helpful assistant. This is where fine-tuning comes in.

Companies like OpenAI, Anthropic, and Google take their pre-trained models and refine them specifically to be helpful, honest, and harmless in conversation. A key technique here is called RLHF — Reinforcement Learning from Human Feedback. Human trainers review thousands of model responses and rate them — which responses are more helpful, which are more accurate, which are better written. The model then learns to generate responses more like the highly-rated ones and less like the poorly-rated ones.

This is the step that turns a raw text prediction machine into the polished conversational AI assistants that millions of people use today. ChatGPT, Gemini, and Claude are all products of this fine-tuning process, applied on top of their respective underlying models.

The Main Players — ChatGPT, Gemini, and Claude Compared

Now that you understand how LLMs work, let’s look at the three you’re most likely to encounter and what makes each one distinct.

ChatGPT — Made by OpenAI

ChatGPT is the one that started the mainstream AI revolution. Launched in November 2022, it was the first LLM product to reach ordinary people at massive scale. The underlying model is called GPT — which stands for Generative Pre-trained Transformer — and OpenAI has released multiple generations of it.

ChatGPT is known for its general-purpose intelligence — strong at writing, summarizing, brainstorming, explaining concepts, and coding. It also supports image generation through a tool called DALL-E and can browse the internet for current information. It has the largest user base and the most extensive third-party integration of any LLM platform, with plugins and connections to tools like Slack, Salesforce, and many more.

Gemini — Made by Google DeepMind

Gemini is Google’s answer to ChatGPT, and it brings a very significant advantage — deep integration with Google’s own products and services. Gemini can search the web in real time using Google Search, giving it access to current information in a way that’s tightly integrated with the world’s most powerful search engine.

One of Gemini’s standout features is its multimodal capability — it can process and understand text, images, audio, and video within the same conversation. It also has an extremely large context window of up to one million tokens, meaning it can process entire books or large codebases in a single session.

Claude — Made by Anthropic

Claude is built by Anthropic, a company founded specifically with the goal of making AI safer and more aligned with human values. Claude is widely recognized for its ability to handle very long, nuanced conversations and documents, its careful and thoughtful responses, and its strong focus on accuracy and honesty.

What makes Claude particularly distinctive is Anthropic’s emphasis on safety research. Claude is designed to be less likely to generate harmful, misleading, or manipulative content, and it’s built to acknowledge uncertainty rather than confidently stating things it doesn’t know. This makes it especially popular for professional tasks like legal document analysis, research, and complex writing.

Why Do AI Chatbots Sometimes Get Things Wrong?

This is one of the most important things to understand about LLMs — and one that most people don’t fully grasp.

LLMs do not think in the way humans think. They do not have opinions, feelings, or consciousness. They do not look things up in a database when you ask a question. They generate responses by predicting what text is most likely to follow the prompt you gave them, based on patterns learned during training.

This means that sometimes the model generates text that sounds extremely confident and well-structured but is factually incorrect. This is called hallucination — and it’s not a bug that can simply be fixed. It’s a fundamental characteristic of how these systems work.

An LLM hallucinating is like a person who has read millions of books but has no ability to verify what they’re saying in real time — they’ll construct a plausible-sounding answer based on patterns, even when the factual basis for that answer doesn’t exist.

This is why you should never blindly trust an LLM’s output for important factual claims, medical or legal questions, or anything that requires verified accuracy. Always cross-check significant information with authoritative sources.

What Can LLMs Actually Do? Real-World Uses

LLMs have proven genuinely useful across a wide range of tasks. Here are the most valuable applications that people use them for today.

Writing and content creation — Drafting emails, blog posts, social media captions, product descriptions, cover letters, and more. LLMs are remarkably capable writing assistants that can match different tones and styles.

Summarization — Give an LLM a long article, research paper, legal document, or meeting transcript and it can produce a concise, accurate summary in seconds. This alone saves professionals enormous amounts of time.

Question answering and research — LLMs can explain complex topics in simple language, answer follow-up questions, and help you understand subjects you’re unfamiliar with. Think of it as having a knowledgeable conversation partner available at any time.

Coding assistance — Developers use LLMs to write code, debug errors, explain what existing code does, and suggest improvements. Tools like GitHub Copilot, built on LLM technology, have fundamentally changed how software is written.

Translation — LLMs are highly capable at translating text between languages, often producing more natural-sounding results than traditional translation tools.

Creative work — Writing stories, generating ideas, brainstorming concepts, creating scripts, composing poems — LLMs are powerful creative collaborators for writers, marketers, and designers.

Customer support — Businesses use LLMs to power intelligent chatbots that can handle complex customer queries, understand context, and provide accurate answers — far beyond the keyword-matching bots of the past.

What LLMs Cannot Do — Important Limitations

Understanding what LLMs cannot do is just as important as knowing what they can.

They don’t have real-time knowledge unless specifically connected to live search. Their training data has a cutoff date, so they may not know about recent events.

They cannot truly reason or think logically in the way humans do. They can simulate reasoning patterns they’ve seen in training data, but they don’t have genuine logical understanding.

They cannot verify their own outputs. They have no mechanism to check whether what they’re saying is true before saying it.

They have no memory between conversations by default. Each new conversation starts fresh — they don’t remember who you are or what you discussed before unless you tell them again or the product is specifically built with memory features.

They cannot take actions in the real world on their own — unless they are configured as AI agents connected to external tools and systems.

Key Terms — Quick Reference

Here are the most important LLM-related terms explained simply, so you’re never lost when reading about AI.

LLM — Large Language Model. The core AI technology behind ChatGPT, Claude, Gemini, and most modern AI tools.

Token — The small units of text that an LLM processes. Not always full words — can be parts of words or characters.

Parameters — The billions of numerical settings inside an LLM that determine how it processes and generates text. More parameters generally means more capability.

Transformer — The neural network architecture that powers all modern LLMs. Introduced by Google in 2017.

Attention Mechanism — The key innovation inside the Transformer that lets the model consider all parts of the input simultaneously when generating a response.

Context Window — The maximum amount of text an LLM can process in a single session. Think of it as the model’s working memory.

Hallucination — When an LLM generates text that sounds confident and plausible but is factually incorrect. A fundamental limitation of current LLM technology.

RLHF — Reinforcement Learning from Human Feedback. The technique used to fine-tune LLMs to be helpful, safe, and conversational.

Prompt — The input or question you give to an LLM. The quality of your prompt significantly affects the quality of the response.

Fine-Tuning — The process of taking a pre-trained LLM and training it further on specific data or with specific human feedback to make it better at particular tasks.

Multimodal — An LLM that can process multiple types of input, not just text — such as images, audio, and video. Gemini is a prominent example.

AI Agent — An LLM connected to external tools (like web search, code execution, or APIs) that can take actions and complete multi-step tasks autonomously.

Frequently Asked Questions

Is ChatGPT the same as an LLM?

Not exactly. ChatGPT is a product built on top of an LLM called GPT. The LLM is the underlying AI engine. ChatGPT is the interface and application layer built around it. Similarly, Claude is a product built on Anthropic’s LLM, and Gemini is a product built on Google DeepMind’s LLM.

Do LLMs actually understand what they’re saying?

This is a genuinely complex question that even AI researchers debate. LLMs process language and generate responses with extraordinary sophistication, but they don’t have understanding in the human sense — they don’t have experiences, intentions, or consciousness. They are extremely powerful pattern-matching and prediction systems.

How is an LLM different from a regular search engine?

A search engine like Google finds existing pages that match your query and returns links. An LLM generates a brand new response to your query in natural language, drawing on patterns from its training. Search engines retrieve; LLMs generate. Many modern AI products, including Gemini, combine both — using search to retrieve current information and then using an LLM to present it naturally.

Can an LLM learn from my conversations?

In most consumer products, no — your conversations are not used to update the model in real time. The underlying model remains the same for all users. However, many products offer a “memory” feature that stores facts about you specifically to personalize future conversations, without changing the base model.

Why does the same question sometimes get different answers from an LLM?

LLMs have a setting called temperature that controls how much randomness is in their responses. At higher temperature settings, the model introduces more variation — choosing among multiple plausible next tokens rather than always picking the single most likely one. This is what makes LLMs feel more creative and natural, but also means responses can vary.

Are LLMs dangerous?

Like any powerful technology, LLMs have risks. They can spread misinformation through hallucination, can be misused to generate harmful or deceptive content, and raise serious questions about privacy, employment, and intellectual property. Responsible AI companies like Anthropic, which makes Claude, invest heavily in safety research to mitigate these risks. Being an informed user — understanding both the capabilities and the limitations — is the best protection.

Final Thoughts

Large Language Models are the most significant technological development of this decade. They are already reshaping how people write, learn, work, code, and create — and we are still in the very early stages of what this technology will become.

Understanding what an LLM is — how it’s trained, how it generates responses, why it sometimes makes mistakes, and what each major AI tool is best at — puts you in a far stronger position to use these tools effectively and responsibly.

ChatGPT, Gemini, and Claude are not magic. They are not conscious. They are not infallible. But they are remarkably powerful tools built on genuinely revolutionary technology. And now you understand exactly how they work.

The best way to learn is to use them. Ask questions, experiment, push them to their limits, and notice where they succeed and where they fall short. The more you engage with these tools with informed curiosity, the more value you’ll get from them.