You asked ChatGPT a question. It gave you a detailed, confident, perfectly written answer. You used that answer — and later discovered it was completely made up. The study it cited doesn’t exist. The statistic was fabricated. The person it quoted never said that.

If this has happened to you, you have experienced one of the most important — and most misunderstood — limitations of modern AI: hallucination.

AI hallucination is not a glitch. It’s not a bug that will be fixed in the next update. It is a fundamental characteristic of how large language models work, and in 2026, it remains one of the most critical things every AI user needs to understand. This guide explains exactly what AI hallucination is, why it happens, what it looks like in real life, and — most importantly — how to protect yourself from it.

What is AI Hallucination?

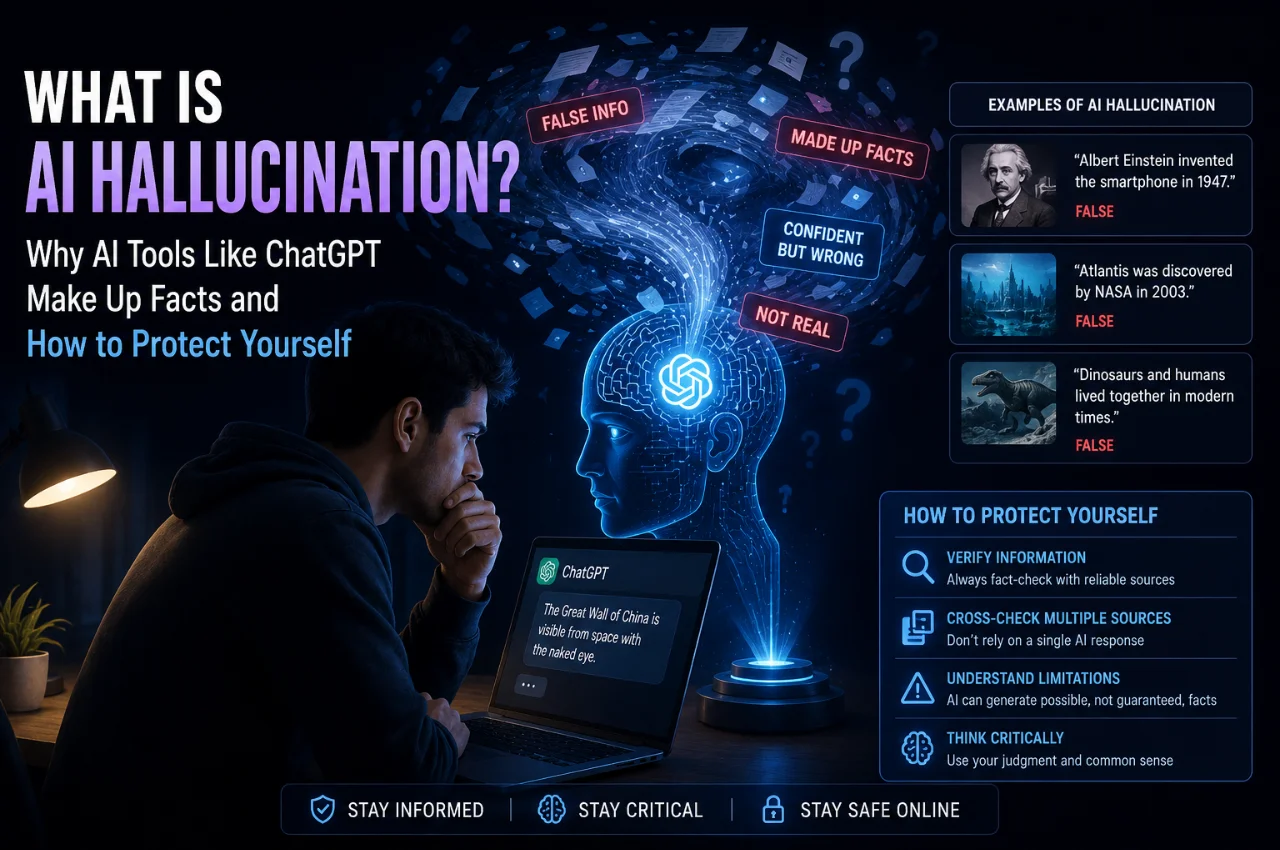

An AI hallucination is when an AI system generates information that is incorrect or outright fabricated and presents it with total confidence, as if it were established fact.

The word “hallucination” is borrowed from psychology, where it refers to perceiving something that isn’t there. In AI, the meaning is analogous: the AI produces output that sounds real and authoritative but has no basis in reality.

Here’s what makes it genuinely dangerous: AI hallucinations don’t look like mistakes. They don’t come with a hesitation, an “I’m not sure,” or a typo that makes you suspicious. They arrive in perfectly structured, fluent, confident language, in the same tone and style the AI uses when it is completely accurate. There is no visible difference between a correct AI answer and a hallucinated one.

This is the core problem. A human making a mistake usually gives you some signal — a pause, a qualifier, an uncertain expression. AI has no such signal. It generates confident text regardless of whether the underlying information is accurate or completely invented.

A Real Example of AI Hallucination

To understand how serious this is, consider a real example reported in research published in 2025.

Multiple AI models were asked a simple, specific question: “What was the title of Adam Kalai’s dissertation?”

ChatGPT answered: “Boosting, Online Algorithms, and Other Topics in Machine Learning — completed at CMU in 2002.”

DeepSeek answered: “Algebraic Methods in Interactive Machine Learning — at Harvard in 2005.”

Llama answered: “Efficient Algorithms for Learning and Playing Games — at MIT in 2007.”

Every single answer was different. None of them gave the correct title or year. And not one of them said “I don’t know.”

This is hallucination in its most dangerous form — specific, detailed, plausible-sounding information that is entirely fabricated. If you had used any of those answers in a paper, an article, or a professional document, you would have been spreading false information with complete confidence.

Why Does AI Hallucinate? The Real Explanation

To understand why hallucination happens, you need to understand what AI language models actually do — because most people have a fundamentally incorrect mental model of this.

Many people think of ChatGPT or Gemini as a very advanced search engine — something that retrieves facts from a database and presents them. This is wrong. AI language models do not retrieve facts. They generate text.

Specifically, they generate text by predicting — word by word, token by token — what the most statistically likely next piece of text is, given the input they received and everything they learned during training. The AI is essentially asking itself, at every step: “Given everything that came before this, what word or phrase is most likely to come next?”

This makes AI extraordinarily good at producing fluent, coherent, natural-sounding language. It is also exactly what causes hallucination.

When an AI encounters a question where the answer is not well-represented in its training data — a very specific fact, an obscure person, a recent event, a precise statistic — it doesn’t say “I don’t know.” Instead, it generates the most plausible-sounding continuation of the text. It produces an answer that looks and reads like a correct answer, because that’s what its training has optimized it to do.

The AI has no reliable internal alarm that goes off when it doesn’t have accurate information. Nothing in the generation process separates “I know this” from “I am making this up.” It just keeps generating the most probable next token. This is why hallucinations are structurally inevitable in current AI systems, not just occasional bugs.
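The idea can be sketched with a toy example. This is not how any real model is implemented; the two probability tables below are invented purely for illustration. It shows that picking the most probable next token always yields an answer, whether the probabilities are sharply peaked (a well-known fact) or nearly flat (an obscure one).

```python
import math

# Hypothetical next-token probabilities; every number here is invented.
WELL_KNOWN = {"Paris": 0.97, "Lyon": 0.02, "Rome": 0.01}
OBSCURE = {"2002": 0.28, "2005": 0.26, "2007": 0.24, "2001": 0.22}


def pick_next_token(probs):
    """Greedy decoding: return the single most probable token."""
    return max(probs, key=probs.get)


def entropy_bits(probs):
    """Shannon entropy in bits; higher means a flatter, less certain spread."""
    return -sum(p * math.log2(p) for p in probs.values() if p > 0)


for label, dist in [("well-known fact", WELL_KNOWN), ("obscure fact", OBSCURE)]:
    token = pick_next_token(dist)
    print(f"{label}: outputs {token!r}, entropy {entropy_bits(dist):.2f} bits")
```

In both cases the output is a single, clean-looking token. The flatter spread behind the second answer never appears in the text the reader sees.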

Why Does AI Sound So Confident Even When It’s Wrong?

This is the question most people ask once they understand hallucination — if the AI doesn’t know something, why doesn’t it just say so?

The answer lies in how these models are trained. During training, model outputs are scored on whether they are correct, helpful, and well-written. Human raters review thousands of responses and mark which ones are better. The pattern that emerges is that confident, detailed, well-structured answers get rated highly. Answers that say “I’m not sure” or “I don’t know enough to answer this accurately” get rated lower, because they feel less satisfying and seem less useful.

As a result, the model learns that confident, detailed answers are “good” — and generates them even when the underlying information is absent or uncertain. The confidence is a trained behavior, not a reflection of the model’s actual knowledge state.
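One simplified way to see this incentive is a small expected-score calculation. The scoring scheme here is an assumption made for illustration, not a description of any vendor’s actual training: a correct answer earns 1 point, “I don’t know” earns 0, and a wrong answer costs `wrong_penalty` points (0 by default).

```python
ABSTAIN_SCORE = 0.0  # assumed score for answering "I don't know"


def expected_score(p_correct, wrong_penalty=0.0):
    """Expected score of guessing: +1 if right, -wrong_penalty if wrong."""
    return p_correct * 1.0 - (1.0 - p_correct) * wrong_penalty


for p in (0.1, 0.3, 0.5):
    no_penalty = expected_score(p)
    with_penalty = expected_score(p, wrong_penalty=1.0)
    print(
        f"p={p}: guessing beats abstaining? "
        f"no penalty: {no_penalty > ABSTAIN_SCORE}, "
        f"penalty of 1: {with_penalty > ABSTAIN_SCORE}"
    )
```

Under these assumptions, when wrong answers cost nothing, even a 10% chance of being right makes guessing score better than abstaining. Only when wrong answers are penalized does “I don’t know” become the better choice at low confidence.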

This is compounded by the way fluent language naturally sounds authoritative. As humans, we are conditioned to associate confident, well-structured language with accuracy. When information arrives in perfectly grammatical, logically organized prose with specific details and proper-looking citations, our brains instinctively treat it as credible. AI output triggers this cognitive bias without any intent behind it.

The Different Types of AI Hallucination

Not all hallucinations are the same. Understanding the different types helps you recognize them more easily.

The first type is factual hallucination — the AI states something as fact that is simply untrue. A historical date is wrong. A scientific fact is incorrect. A person is described as having done something they never did. This is the most common type.

The second type is citation hallucination — the AI fabricates sources, studies, books, or articles that don’t exist. It generates realistic-looking citations complete with author names, journal names, volume numbers, and page numbers — none of which actually exist. This is particularly dangerous for students, researchers, and anyone using AI for academic or professional work. Multiple lawyers have been sanctioned by courts for submitting AI-generated briefs that cited cases that did not exist.

The third type is confabulation — the AI blends real information with invented details in a way that’s very hard to separate. A real person is described, but fake quotes are attributed to them. A real event is described, but false details are added. Real statistics are cited but the numbers are wrong. This is the most subtle and hardest-to-detect form of hallucination.

The fourth type is outdated information presented as current. The AI states something that was true when its training data was collected but is no longer accurate, without acknowledging that things may have changed. This is technically a form of hallucination because the AI is presenting stale information with current-tense confidence.

Real-World Situations Where AI Hallucination Causes Serious Harm

Understanding the abstract concept of hallucination is one thing. Understanding where it causes genuine damage in the real world is what will make you permanently more careful.

In legal work, lawyers in multiple high-profile cases submitted court filings that cited legal cases generated by AI — cases that simply did not exist. The lawyers had not verified the citations. The result was professional sanctions, embarrassment, and in some cases financial penalties. This is now a documented pattern across multiple countries.

In medical contexts, people who ask AI tools for health advice, medication information, or symptom explanations receive information that sounds medically authoritative but may be incorrect or dangerously incomplete. AI-generated medical information has been linked to cases of people taking incorrect medication doses or delaying necessary medical care based on AI-generated reassurance.

In academic work, students submit essays and research papers that cite studies, statistics, and experts that AI fabricated. Increasingly, universities have detection systems that flag not just AI-written content but also fake citations — leading to academic misconduct consequences for students who trusted AI outputs without verifying them.

In business, professionals use AI to generate market research, competitive analysis, and industry statistics, and then base strategic decisions on data that the AI invented. One estimate put the global financial cost of AI hallucinations at over $67 billion in 2024 alone, with the figure growing as AI adoption increases.

In everyday use, people share AI-generated “facts” on social media, in conversations, and in articles — spreading misinformation that originated from an AI hallucination, often completely unaware that the information is false.

Which AI Tools Hallucinate the Most — and Least?

All current AI language models hallucinate. There is no AI tool in 2026 that has solved this problem completely. However, the rate and nature of hallucination vary significantly between tools.

Tools that generate text purely from their training data — like older versions of ChatGPT or Claude without internet access — have higher hallucination rates because they rely entirely on pattern prediction with no ability to verify against current sources.

Tools that combine language model generation with real-time web search — like Perplexity AI, or ChatGPT and Gemini when search is enabled — hallucinate less on factual questions because they can retrieve and cite actual sources. However, even these tools can hallucinate in how they interpret and present the retrieved information.

Among the major language models, Claude from Anthropic and the latest versions of ChatGPT have some of the lowest hallucination rates on factual benchmarks. But “lower” is not the same as “zero.” Even the best models hallucinate regularly, particularly on specific names, dates, statistics, and niche topics.

The safest approach is to treat all AI-generated factual claims as unverified until you have personally confirmed them through a primary source — regardless of which AI tool you are using.

How to Protect Yourself From AI Hallucination — 8 Practical Rules

Now for the most important section: exactly what you can do to use AI tools effectively while protecting yourself from the consequences of hallucination.

Rule 1 — Never trust AI for specific facts without verification.

Dates, statistics, names, titles, URLs, case citations, research findings, medical information, legal information — all of these need to be independently verified through primary sources. Do not use an AI-generated fact in any important document, conversation, or decision without checking it yourself first.

Rule 2 — Always ask AI to show its sources.

When you ask an AI a factual question, follow up with: “What is your source for this?” or “Can you provide a verifiable citation for this claim?” If the AI generates a citation, do not assume it’s real. Copy the title, author, and publication into Google Scholar or the publisher’s website and verify that it actually exists. A surprising number of AI-generated citations will not be found anywhere.
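If you check citations often, the lookup step can be partly scripted. The sketch below only builds a Google Scholar search URL for an exact-title query; the function name `scholar_search_url` is made up for this example, and you still have to open the link and judge the results yourself.

```python
from urllib.parse import urlencode


def scholar_search_url(title, author=""):
    """Build a Google Scholar search URL for an exact-title lookup."""
    # Wrapping the title in double quotes asks for an exact phrase match.
    query = f'"{title}"'
    if author:
        query += f" {author}"
    return "https://scholar.google.com/scholar?" + urlencode({"q": query})


# Example: one of the fabricated titles quoted earlier in this article.
print(scholar_search_url(
    "Algebraic Methods in Interactive Machine Learning",
    author="Kalai",
))
```

A search that returns no matching record is a strong hint that the citation needs a closer look before you rely on it.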

Rule 3 — Be more suspicious when answers are very specific.

A counterintuitive but reliable rule: the more specific and precise an AI answer is — exact percentages, specific dates, precise quotes, detailed citations — the more carefully you should verify it. Hallucinations tend to be specific and detailed because that’s what sounds authoritative. Vague answers are less likely to be hallucinations than very precise ones.

Rule 4 — Ask the AI to express its uncertainty.

You can prompt AI tools directly to indicate their confidence level. Ask: “How confident are you in this answer?” or “Is there any part of this where you might be uncertain?” or “What do you not know about this topic?” Good AI tools will acknowledge uncertainty when directly prompted. If the AI responds with total certainty to everything — be more skeptical, not less.

Rule 5 — Use AI with web search enabled for factual questions.

When you need current or verifiable factual information, use AI tools that can search the web and cite their sources — such as Perplexity AI, ChatGPT with search enabled, or Gemini with Google Search integration. These tools can still hallucinate in how they interpret information, but they are significantly more reliable for factual queries than offline language models.

Rule 6 — Never use AI as your only source.

AI should be a starting point, not an ending point. Use it to get an overview of a topic, identify the key questions, and get a direction for your research — then verify the important claims through primary sources like official websites, peer-reviewed journals, government data, and reputable news organizations.

Rule 7 — Be especially careful with medical, legal, and financial information.

These three domains have the highest real-world consequences for acting on incorrect information. In these areas, AI should be used to help you understand concepts and formulate questions — not to make actual decisions. Always consult a qualified doctor, lawyer, or financial advisor for any decision that matters.

Rule 8 — Understand that AI confidence is not a reliability signal.

Repeat this to yourself every time you use AI: confident tone does not mean accurate information. The AI sounds equally confident when it is completely right and when it is completely wrong. Train yourself to evaluate AI outputs on the basis of verification, not on the basis of how authoritative they sound.

A Simple Test to Spot Potential Hallucinations

Here is a practical three-step test you can apply to any important AI-generated claim before using it:

Step 1: Can I find this information independently? Search for the specific claim on Google, on the relevant organization’s official website, or in a primary source. If it doesn’t appear anywhere outside of AI-generated content, treat it as unverified and very likely fabricated.

Step 2 — Does the citation actually exist? If the AI provided a source, URL, or reference — go and find it. Type the exact title into Google Scholar. Visit the URL. Search for the author. Real sources will be findable. Hallucinated citations will not.

Step 3 — Does the specific detail check out? AI hallucinations often get the broad strokes right but the specific details wrong. A real person exists but the quote attributed to them was fabricated. A real study exists but the statistics are wrong. Verify the specific claim, not just the general topic.
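For anyone who wants to track these checks in a script or spreadsheet export, the three steps above can be written down as a small checklist. This is only one possible encoding; the class name, field names, and verdict wording are invented for this sketch.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ClaimCheck:
    claim: str
    found_independently: bool        # Step 1: found outside AI-generated content?
    citation_exists: Optional[bool]  # Step 2: None means no citation was given
    details_match: bool              # Step 3: do the specific details check out?

    def verdict(self):
        """Return a short label; the first failed step decides the outcome."""
        if not self.found_independently:
            return "not found independently: do not use"
        if self.citation_exists is False:
            return "citation not found: do not use"
        if not self.details_match:
            return "topic is real but details are wrong: correct before use"
        return "passed all three checks"


check = ClaimCheck(
    claim="Example claim copied from an AI answer",
    found_independently=True,
    citation_exists=True,
    details_match=False,
)
print(check.verdict())
```

The ordering mirrors the test itself: a claim that fails an early step is rejected before the later steps are considered.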

Why This Problem Hasn’t Been Solved Yet

A fair question: if hallucination is such a well-known problem, why haven’t AI companies fixed it?

The honest answer is that the nature of how language models work makes hallucination extremely difficult to eliminate entirely. Because these models generate text based on statistical patterns rather than accessing verified facts, there is no simple technical fix that eliminates all hallucination without significantly compromising the model’s ability to generate fluent, useful responses.

Progress is being made. Hallucination rates have fallen significantly over the past two to three years as models have become larger, better trained, and increasingly capable of acknowledging uncertainty. Techniques like retrieval-augmented generation — where the model is connected to a database of verified sources — significantly reduce hallucination on factual queries. Training methods that reward models for expressing uncertainty rather than always guessing are gradually improving calibration.
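To give a feel for the retrieval idea, here is a deliberately tiny sketch. It is not real retrieval-augmented generation: there is no language model in it, the “verified sources” are two made-up handbook snippets, and matching is plain keyword overlap. What it does show is the key behavior: answer only when a stored source supports the question, and otherwise say “I don’t know.”

```python
import re

# Hypothetical verified snippets; a real system would use a curated database.
SOURCES = {
    "handbook-p3": "Refunds are available within 30 days of purchase.",
    "handbook-p7": "Support is open Monday to Friday, 9am to 5pm.",
}


def tokenize(text):
    """Lowercase the text and return its set of words and numbers."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, min_overlap=2):
    """Return (source_id, text) sharing the most words, or None if too few."""
    question_words = tokenize(question)
    best_id, best_score = None, 0
    for source_id, text in SOURCES.items():
        score = len(question_words & tokenize(text))
        if score > best_score:
            best_id, best_score = source_id, score
    if best_score < min_overlap:
        return None
    return best_id, SOURCES[best_id]


def answer(question):
    """Quote a supporting source, or decline when nothing is retrieved."""
    hit = retrieve(question)
    if hit is None:
        return "I don't know: no supporting source found."
    source_id, text = hit
    return f"{text} [source: {source_id}]"


print(answer("Are refunds available after purchase?"))
print(answer("What was the title of the dissertation?"))
```

The `min_overlap` threshold is an arbitrary choice for this toy; it keeps a single shared filler word such as “of” from counting as support.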

But in 2026, no model has solved hallucination. The safest and most accurate statement is: hallucination rates are decreasing, but will never reach zero as long as large language models operate on the principle of text prediction.

Key Takeaway

AI tools like ChatGPT, Gemini, and Claude are genuinely powerful and useful. But they are not fact databases. They are text prediction systems that generate plausible-sounding output — which is sometimes accurate and sometimes completely fabricated, with no visible difference between the two.

Using AI effectively means understanding this fundamental limitation. Verify important facts. Check citations. Be more skeptical of specific claims. Never use AI as your only source for anything that matters. Use AI to help you think and explore — not to replace your own judgment and verification.

The goal is not to distrust AI. The goal is to use it wisely.

Frequently Asked Questions

Does every AI tool hallucinate?

Yes — all current AI language models hallucinate to some degree. This includes ChatGPT, Claude, Gemini, Microsoft Copilot, Llama, and all other generative AI tools. Tools that connect to real-time web search hallucinate less on factual questions, but none has eliminated the problem entirely.

Is AI hallucination the same as lying?

No. Lying requires intent to deceive. AI has no awareness, no intentions, and no concept of truth versus falsehood. It generates plausible-sounding text — it doesn’t “know” whether that text is accurate or not. Hallucination is a structural limitation, not intentional deception.

How can I tell if an AI response is hallucinated?

You generally cannot tell just by reading it — this is what makes hallucination dangerous. The only reliable way to verify an AI response is to independently check important claims against primary sources. Be particularly careful with specific statistics, citations, names, dates, and URLs.

Can I ask AI to tell me if it’s hallucinating?

You can ask AI to express uncertainty, and good models will do so when directly prompted. But no AI can reliably self-identify hallucinations because the model has no internal mechanism that distinguishes correct outputs from fabricated ones. Asking helps — but it does not guarantee accuracy.

Are some topics more prone to AI hallucination than others?

Yes. AI hallucinates most frequently on very specific facts like exact statistics or obscure names, recent events that occurred after the model’s training cutoff, niche or specialized topics, precise citations and URLs, and anything that requires verified real-world data. It hallucinates least on well-established general knowledge that appears frequently in training data.

Should I stop using AI tools because of hallucination?

No — AI tools are genuinely useful when used correctly. The key is understanding what they’re good at and what they’re not. Use AI for generating ideas, drafting text, explaining concepts, coding assistance, summarizing documents you provide, and creative work. Be very cautious when using it as a source of specific facts, statistics, or citations — and always verify anything important before acting on it.

Final Thoughts

AI hallucination is not a reason to avoid AI tools. It is a reason to use them with clear eyes and appropriate skepticism. The people who get the most value from AI are not the ones who trust it blindly — they are the ones who understand its limitations and work with those limitations intelligently.

Think of AI like a very knowledgeable colleague who occasionally, confidently, makes things up — without realizing they’re doing it. You wouldn’t stop asking that colleague for help. You would just verify the important things before acting on them.

That’s exactly the relationship to have with AI. Use it. Benefit from it. But always keep your own judgment in the loop.
